[FREE CODE INSIDE] How to Run Visual Query Fan-Out Analysis in Screaming Frog Using OpenAI

Google announced a new feature called "visual search fan-out" in their September 2025 updates. It's a technique where AI Mode performs a comprehensive analysis of an image, recognizing subtle details and secondary objects in addition to the primary subjects, and then runs multiple queries in the background.

This is exactly the problem I've been trying to solve with my Visual Search Query Fan-out tool for Screaming Frog. Let me show you what this actually means and why it matters for SEO.

Important note: this can get quite expensive if you run it on a full crawl of thousands of pages, so start with a short list of URLs.
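To get a feel for why crawl size matters, here is a back-of-the-envelope sketch. The per-image cost below is a placeholder I made up for illustration, not real OpenAI pricing, and `estimateCrawlCostUsd` is a hypothetical helper, not part of the tool.

```javascript
// Rough cost estimate: pages x images analyzed per page x cost per image.
// All numbers passed in below are illustrative assumptions, not real pricing.
function estimateCrawlCostUsd(pageCount, imagesPerPage, costPerImageUsd) {
  if (pageCount < 0 || imagesPerPage < 0 || costPerImageUsd < 0) {
    throw new RangeError("inputs must be non-negative");
  }
  return pageCount * imagesPerPage * costPerImageUsd;
}

// Hypothetical scenario: 5 images per page at half a cent per image.
const fullCrawl = estimateCrawlCostUsd(5000, 5, 0.005);
const listCrawl = estimateCrawlCostUsd(50, 5, 0.005);
console.log(`full crawl: ~$${fullCrawl.toFixed(2)}, list crawl: ~$${listCrawl.toFixed(2)}`);
```

Under those assumed numbers, a 5,000-page crawl costs about a hundred times more than a 50-URL list crawl, which is why I suggest starting from a list. Plug in current pricing for whichever model you use.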

Quick start guide:

- Check your Search Console.

- Filter for top pages or low-performing pages.

- Export the URLs.

- Crawl them in Screaming Frog's list mode.
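If you'd rather script the filtering step, something like the sketch below could work on an exported page report. The row shape (`page`, `clicks`, `impressions`) and the thresholds are assumptions for illustration; adjust them to match your actual export.

```javascript
// Pick URLs worth crawling: top pages by clicks, plus pages with many
// impressions but a low click-through rate. Row shape and thresholds are
// hypothetical; adapt them to your own Search Console export.
function pickUrlsToCrawl(rows, options = {}) {
  const { topN = 10, lowCtrThreshold = 0.01, minImpressions = 100 } = options;

  // Copy before sorting so the caller's array is not mutated.
  const top = [...rows]
    .sort((a, b) => b.clicks - a.clicks)
    .slice(0, topN)
    .map((row) => row.page);

  const lowPerformers = rows
    .filter((row) => row.impressions >= minImpressions)
    .filter((row) => row.clicks / row.impressions < lowCtrThreshold)
    .map((row) => row.page);

  // A Set removes duplicates while keeping first-seen order.
  return [...new Set([...top, ...lowPerformers])];
}

const sampleRows = [
  { page: "/a", clicks: 500, impressions: 10000 },
  { page: "/b", clicks: 2, impressions: 5000 },
  { page: "/c", clicks: 50, impressions: 800 },
  { page: "/d", clicks: 0, impressions: 20 },
];
console.log(pickUrlsToCrawl(sampleRows, { topN: 2 }).join("\n"));
```

Paste the resulting list into Screaming Frog and you have a small, targeted crawl instead of a full one.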

**Access free Custom JavaScript here: https://github.com/metehan777/screaming-frog-visual-query-fan-out**

What Visual Query Fan-Out Really Is

Think about how you'd describe a photo to someone who can't see it. You wouldn't just say "it's a backpack." You'd mention the color, the style, maybe the laptop sleeve you can see, the water bottle pocket, the brand logo if it's visible. You'd describe the whole context.

That's what visual query fan-out does. Instead of:

- Image → "blue backpack" → match searches for "blue backpack"

It's now:

- Image → analyze everything → generate dozens of relevant queries → match searches for any of those interpretations

Google's new approach helps it understand the full visual context and the nuance of your natural language question to deliver highly relevant visual results. My tool does the same analysis but shows you what queries your images could match if properly optimized.

Check this out: https://blog.google/products/search/search-ai-updates-september-2025/

The Custom JavaScript I Built

I created a custom JavaScript extraction for Screaming Frog that uses OpenAI's Vision API to analyze images and generate comprehensive search queries based on what it sees. It's basically doing what Google's now doing internally - looking at images and understanding all the different ways someone might search for them.

Here's what happens when you run it:

- Find the important images - It skips logos, UI elements, tracking pixels and focuses on product images, content images, galleries

- Comprehensive visual analysis - The AI identifies everything: primary subjects, secondary objects, visible text, colors, materials, styles, implied use cases

- Generate search queries - For each element and combination of elements, it creates queries real people might actually search
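To make the middle step concrete, here is a minimal sketch of what a vision request body can look like. It follows the image-input message format of OpenAI's Chat Completions API as I understand it; the model name, the prompt wording, and the `buildVisionRequest` helper are assumptions for illustration, and the script in the repo may be structured differently.

```javascript
// Build a Chat Completions request body that asks a vision-capable model
// to analyze one image and return fan-out queries. The model name and the
// prompt text are illustrative assumptions, not the tool's exact values.
function buildVisionRequest(imageUrl, options = {}) {
  const { model = "gpt-4o-mini", maxTokens = 1000 } = options;

  if (typeof imageUrl !== "string" || !/^(https?:\/\/|data:image\/)/.test(imageUrl)) {
    throw new TypeError("imageUrl must be an http(s) URL or a data:image URI");
  }

  const prompt = [
    "Analyze this image for visual search.",
    "List primary subjects, secondary objects, visible text, colors, materials, styles, and implied use cases.",
    "Then generate search queries real people might use to find it.",
    "Respond as JSON with keys: objects, attributes, queries.",
  ].join(" ");

  return {
    model,
    max_tokens: maxTokens,
    messages: [
      {
        role: "user",
        content: [
          { type: "text", text: prompt },
          { type: "image_url", image_url: { url: imageUrl } },
        ],
      },
    ],
  };
}

const body = buildVisionRequest("https://example.com/images/coffee-maker.jpg");
console.log(JSON.stringify(body, null, 2));
```

The body would then be sent as a POST to `https://api.openai.com/v1/chat/completions` with your API key in the `Authorization: Bearer` header. Building the payload separately keeps it easy to inspect before you spend any credits.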

Real Example: The Coffee Maker Test

I ran this on an e-commerce site selling kitchen appliances. Here's what happened with one "simple" coffee maker image:

Traditional SEO approach:

- Alt text: "12-cup coffee maker"

- Maybe mentioned in product description: "stainless steel," "programmable"

What the visual fan-out analysis found:

Objects Detected:

• Automatic drip coffee maker with thermal carafe [Kitchen Appliance]

Attributes:

- Material: brushed stainless steel with black accents

- Capacity: 12-cup (visual estimate from size)

- Features: LED display panel, multiple buttons (programmable)

- Style: modern/professional

- Carafe type: thermal (double-walled visible)

Generated Queries:

1. "programmable coffee maker with thermal carafe"

2. "stainless steel coffee machine 12 cup"

3. "coffee maker that keeps coffee hot without burner"

4. "office coffee maker for conference room"

5. "coffee machine with timer brushed steel"

6. "thermal pot coffee maker no hot plate"

7. "best coffee maker doesn't burn coffee"

See the difference? The AI picked up that it's a thermal carafe (no hot plate visible), that it's programmable (multiple buttons and display), and even suggested use-case queries like "office coffee maker" based on the professional styling.

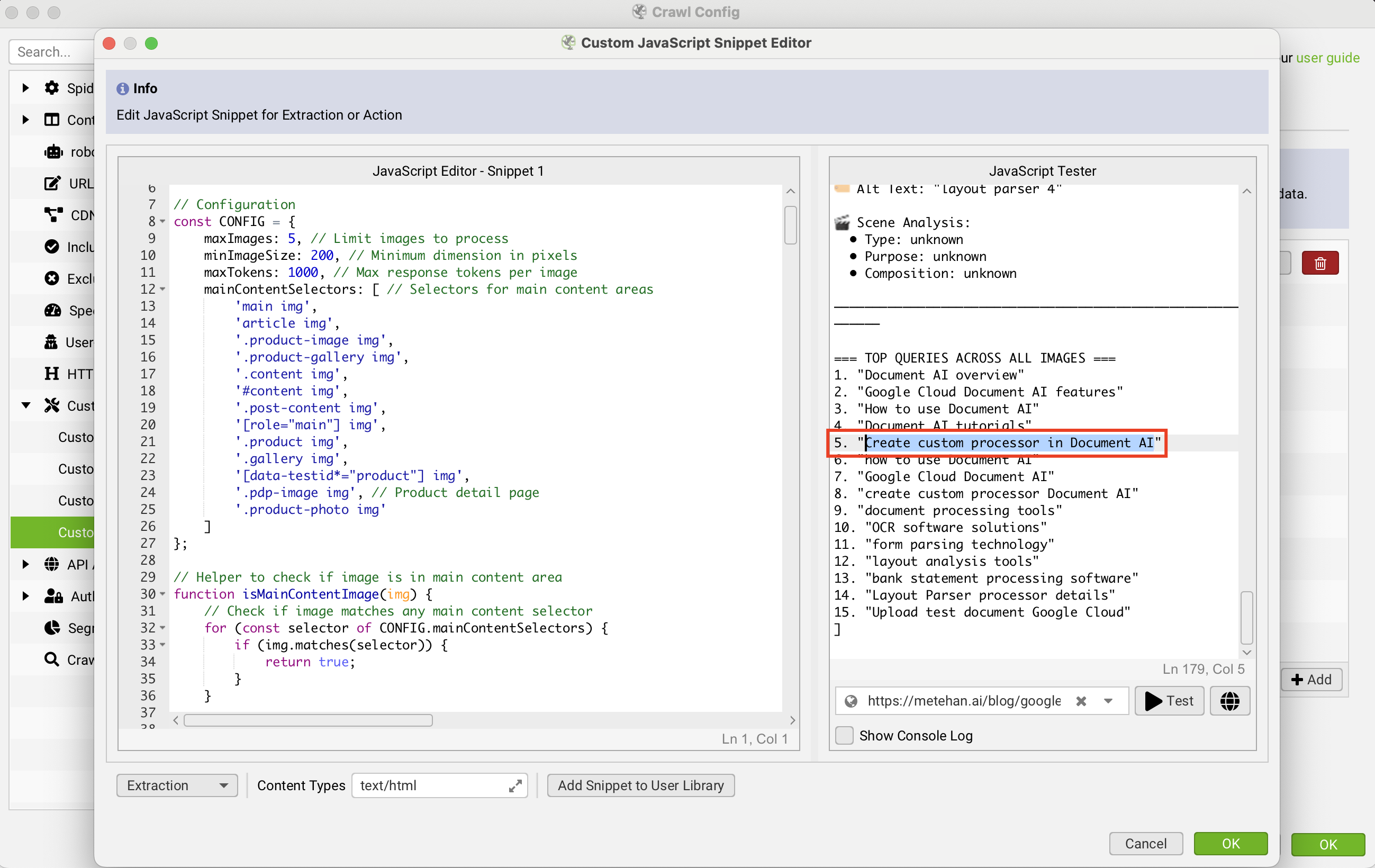
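If you ask the model for JSON, you still have to clean up what comes back. The sketch below assumes a response with `objects`, `attributes`, and `queries` keys (a shape I chose for illustration, not necessarily what the repo's scripts use) and tolerates a response wrapped in a markdown code fence.

```javascript
// Parse a model response into { objects, attributes, queries }.
// The JSON shape is a hypothetical convention; adapt it to your prompt.
function parseFanOutResponse(rawText) {
  const empty = { objects: [], attributes: {}, queries: [] };

  // Models sometimes wrap JSON in a markdown fence; strip it if present.
  const cleaned = String(rawText)
    .trim()
    .replace(/^`{3}(?:json)?\s*/i, "")
    .replace(/\s*`{3}$/, "");

  let data;
  try {
    data = JSON.parse(cleaned);
  } catch {
    return empty; // Not valid JSON: return an empty result instead of crashing the crawl.
  }
  if (data === null || typeof data !== "object" || Array.isArray(data)) return empty;

  // Normalize and de-duplicate queries, keeping first-seen order.
  const seen = new Set();
  const queries = [];
  for (const query of Array.isArray(data.queries) ? data.queries : []) {
    if (typeof query !== "string") continue;
    const normalized = query.trim().toLowerCase().replace(/\s+/g, " ");
    if (normalized && !seen.has(normalized)) {
      seen.add(normalized);
      queries.push(normalized);
    }
  }

  const attributes =
    data.attributes && typeof data.attributes === "object" && !Array.isArray(data.attributes)
      ? data.attributes
      : {};

  return {
    objects: Array.isArray(data.objects) ? data.objects : [],
    attributes,
    queries,
  };
}

const sample = '{"objects":["drip coffee maker"],"attributes":{"material":"brushed stainless steel"},"queries":["Thermal pot coffee maker no hot plate","thermal pot  coffee maker no hot plate"]}';
console.log(parseFanOutResponse(sample).queries);
```

Returning an empty result on bad JSON is a deliberate choice here: one malformed response shouldn't break the extraction for the rest of the page.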

Real & Fast Example 2: A Niche Blog Post

I tested it on my "Document AI Layout Parser" blog post. One of the queries in the output was "create custom processor in Document AI."

Then I ran this query in Google Images and boom!

Why This Matters Right Now

Google's AI Mode can now show you rich visuals that match the vibe you're looking for, with the ability to continuously refine your search in the way that's most natural for you. This means Google is understanding images at a conceptual level, not just matching keywords.

If your images contain valuable visual information that's not in your text, you're invisible to these new search capabilities.

The Shopping Game-Changer

For shopping, you can simply describe what you're looking for - like the way you'd talk to a friend - without having to sort through filters. Google gives the example of searching for "barrel jeans that aren't too baggy" and getting intelligent visual results.

This is huge for e-commerce. The script surfaces the conversational queries your products could match:

Traditional query: "women's jeans size 28"

Visual fan-out queries from analyzing product images:

- "jeans that make legs look longer"

- "dark wash jeans no distressing professional"

- "comfortable jeans for all day sitting"

- "jeans like Everlane but cheaper"

- "minimal stitching clean look denim"

If Google's visual analysis sees these attributes in your images but you haven't mentioned them in text, you're missing out.

What I Discovered Running This Tool

After I analyzed hundreds of e-commerce sites, four patterns emerged:

1. Color Blindness

Sites say "blue" when the image clearly shows "dusty slate blue" or "french navy." The specific shade matters for conversational searches.

2. Missing Context Clues

A desk photo with a laptop, coffee mug, and plant suggests "work from home setup" - but nobody's optimizing for that query even though their image perfectly matches it.

3. Style Attributes

Images clearly show "minimalist," "industrial," or "cottagecore" aesthetics, but these valuable style descriptors appear nowhere in the text.

4. Unmentioned Features

Visible features like "reinforced stitching," "hidden pockets," or "adjustable straps" are things customers search for, but they aren't in product descriptions.

How to Use This Intelligence

Immediate Actions

- Run the visual analysis - Use my tool or build your own to see what queries your images could match

- Close the gaps - Add those missing visual attributes to your product descriptions and surrounding content

- Write better alt text - Not just "blue shirt" but "navy blue oxford cloth button-down shirt with chest pocket"

- Create supporting content - Turn those query suggestions into FAQ sections, buying guides, or blog posts
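The "close the gaps" step can be roughed out in code: compare the terms in the generated queries against the text already on the page and list what's missing. This is a naive word-level sketch with a tiny hand-picked stop-word list (both are my assumptions), not a feature of the tool itself.

```javascript
// Find query terms that never appear in the page text.
// Word-level matching and the stop-word list are simplifying assumptions.
const STOP_WORDS = new Set([
  "a", "an", "the", "for", "with", "that", "to", "of", "in", "and", "no", "best",
]);

function tokenize(text) {
  return text.toLowerCase().match(/[a-z0-9]+/g) || [];
}

function findMissingTerms(queries, pageText) {
  const pageTokens = new Set(tokenize(pageText));
  const missing = new Map(); // term -> number of queries containing it

  for (const query of queries) {
    // Count each term once per query.
    for (const term of new Set(tokenize(query))) {
      if (STOP_WORDS.has(term) || pageTokens.has(term)) continue;
      missing.set(term, (missing.get(term) || 0) + 1);
    }
  }

  // Most frequent first, then alphabetical for stable output.
  return [...missing.entries()]
    .sort((a, b) => b[1] - a[1] || a[0].localeCompare(b[0]))
    .map(([term, count]) => ({ term, count }));
}

const pageText = "12-cup coffee maker, stainless steel, programmable";
const generatedQueries = [
  "programmable coffee maker with thermal carafe",
  "thermal pot coffee maker no hot plate",
  "coffee machine with timer brushed steel",
];
console.log(findMissingTerms(generatedQueries, pageText));
```

With the coffee maker example, "thermal" comes out on top because it shows up in two generated queries but nowhere in the existing copy. Treat the output as a list of candidates to review, not text to paste in blindly.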

Strategic Thinking

Google mentions understanding subtle details and secondary objects in addition to the primary subjects. This means you need to think about:

- Scene composition - What's in the background? What does the overall composition suggest?

- Implied use cases - What activities or situations does the image suggest?

- Emotional resonance - What "vibe" or feeling does the image convey?

The Technical Setup

The tool uses two scripts:

- Lite version: Quick analysis, summary of findings, top queries

- Full version: Detailed per-image breakdown, all queries grouped by type

Configuration options:

    const CONFIG = {
      maxImages: 5,             // Don't blow through API credits
      minImageSize: 200,        // Skip thumbnails
      maxTokens: 1000,          // Response length per image
      mainContentSelectors: [   // Customize for your site structure
        'main img',
        '.product-image img',
        // ... your selectors
      ]
    };
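To show how options like `maxImages` and `minImageSize` can drive image selection, here is a simplified sketch that works on plain objects instead of DOM nodes. The skip pattern, the rule that both width and height must meet `minImageSize`, and the `selectImages` helper are my assumptions for illustration; check the repo for the actual filtering logic.

```javascript
// Example settings mirroring two of the options above.
const EXAMPLE_CONFIG = { maxImages: 5, minImageSize: 200 };

// Hypothetical URL pattern for images that are rarely worth analyzing.
const SKIP_PATTERN = /logo|icon|sprite|pixel|tracking|avatar/i;

// Keep the first `maxImages` unique images that are large enough and
// don't look like logos, UI elements, or tracking pixels.
function selectImages(images, config = EXAMPLE_CONFIG) {
  const seen = new Set();
  const selected = [];

  for (const image of images) {
    if (!image.src || seen.has(image.src)) continue;
    if (image.width < config.minImageSize || image.height < config.minImageSize) continue;
    if (SKIP_PATTERN.test(image.src)) continue;

    seen.add(image.src);
    selected.push(image);
    if (selected.length >= config.maxImages) break;
  }
  return selected;
}

const candidates = [
  { src: "/img/site-logo.png", width: 400, height: 120 },
  { src: "/img/coffee-maker-front.jpg", width: 1200, height: 1200 },
  { src: "/img/thumb-1.jpg", width: 80, height: 80 },
  { src: "/img/coffee-maker-front.jpg", width: 1200, height: 1200 },
  { src: "/img/coffee-maker-side.jpg", width: 1000, height: 800 },
];
console.log(selectImages(candidates).map((image) => image.src));
```

In a browser context, the candidate list could come from `document.querySelectorAll()` on the joined `mainContentSelectors`, reading each element's `src`, `naturalWidth`, and `naturalHeight`.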

What This Means for SEO

We're entering an era where visual search isn't a separate thing anymore. Google's visual search fan-out allows for deeper understanding of precisely what's in an image, and it's integrated directly into regular search.

Your images aren't just images anymore. They're collections of searchable concepts, attributes, and contexts. The question is: are you optimized for what Google's seeing?

The Opportunity Everyone's Missing

Most sites have hundreds or thousands of images that are basically invisible to these new visual understanding capabilities. They show valuable information that's never mentioned in text.

Running my visual query fan-out tool reveals this hidden potential. Every image becomes a map of missed opportunities - queries you could rank for if what Google is looking at had proper textual context.

Try It Yourself

The code is open source on GitHub. You need:

- Screaming Frog SEO Spider

- An OpenAI API key

- About 5 minutes to set it up

Even if you just run it on your top 10 pages, you'll discover optimization opportunities you never knew existed.

**Access free Custom JavaScript here: https://github.com/metehan777/screaming-frog-visual-query-fan-out**

The Bottom Line

Google's investing heavily in visual understanding. Their visual search fan-out technique runs multiple queries in the background based on comprehensive image analysis.

The sites that win will be the ones that understand their visual content as deeply as Google's AI does. This tool gives you that understanding.

Because in a world where people search conversationally and Google understands images comprehensively, the gap between what's visible and what's described is where opportunity lives.

P.S. - The tool costs fractions of a cent per image to run. The insights it provides? Those are worth significantly more.
